Every AI coding assistant I've used locks you into a single provider. Want to use Claude for architecture discussions and GPT-4 for quick edits? Start a new session, lose your context, repeat your explanation of what you're trying to build. This release fixes that.

True Model Agnosticism

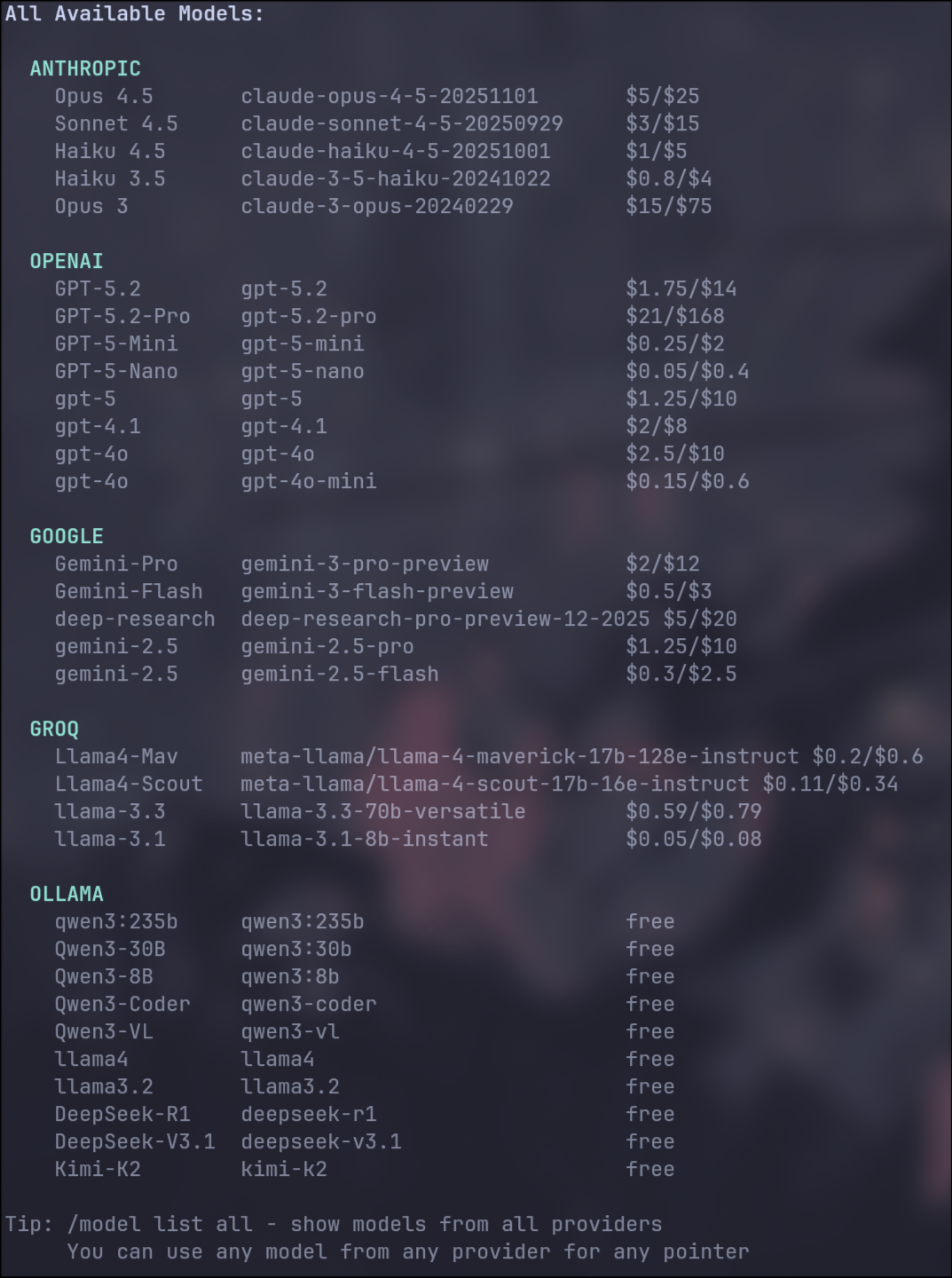

REI now maintains a provider-independent conversation history. Switch from Claude to GPT-4 to a local Llama model mid-conversation, and the context follows. The translation layer handles the differences in how each provider represents tool calls, system prompts, and message formats.

The implementation is messier than it sounds. Different providers have different token limits, different ways of handling images, different expectations for tool invocation and result formatting. REI abstracts all of this behind a unified interface. Pick the model you want; REI handles the translation.
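To make the translation idea concrete, here is a minimal sketch, not REI's actual code. The `Message` shape and the `to_anthropic` / `to_openai` function names are hypothetical. It assumes the publicly documented request shapes: Anthropic-style APIs take the system prompt as a top-level field and tool calls as `tool_use` content blocks, while OpenAI-style chat APIs take the system prompt as the first message and tool-call arguments as a JSON string. Tool results, images, and token limits are left out.

```python
import json
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Message:
    """Provider-neutral message (hypothetical shape, not REI's real schema)."""
    role: str  # "user" or "assistant"
    text: str = ""
    # Tool calls requested by the assistant: [{"id", "name", "args"}]
    tool_calls: list[dict[str, Any]] = field(default_factory=list)


def to_anthropic(system: str, history: list[Message]) -> dict[str, Any]:
    """System prompt is a top-level field; tool calls become content blocks."""
    messages = []
    for m in history:
        blocks: list[dict[str, Any]] = []
        if m.text:
            blocks.append({"type": "text", "text": m.text})
        for call in m.tool_calls:
            blocks.append({"type": "tool_use", "id": call["id"],
                           "name": call["name"], "input": call["args"]})
        messages.append({"role": m.role, "content": blocks})
    return {"system": system, "messages": messages}


def to_openai(system: str, history: list[Message]) -> dict[str, Any]:
    """System prompt is the first message; tool arguments are a JSON string."""
    messages: list[dict[str, Any]] = [{"role": "system", "content": system}]
    for m in history:
        out: dict[str, Any] = {"role": m.role, "content": m.text or None}
        if m.tool_calls:
            out["tool_calls"] = [
                {"id": c["id"], "type": "function",
                 "function": {"name": c["name"],
                              "arguments": json.dumps(c["args"])}}
                for c in m.tool_calls
            ]
        messages.append(out)
    return {"messages": messages}
```

Because the history is stored once in the neutral form, switching models only changes which of these functions is called on the next request.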

Metrics Dashboard

I've been flying blind on costs. A long conversation with a capable model can run up a surprisingly large bill, and until now there was no good way to see it happening in real time. The new metrics dashboard tracks token usage, estimated costs, and performance metrics across your session.

The dashboard updates in real time as responses stream in. You can see exactly how your conversation is consuming resources, which models are generating the most tokens, and where your API budget is going.
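The cost estimate behind a dashboard like this is simple arithmetic over token counts. A minimal sketch, assuming per-million-token pricing; the `SessionMetrics` class, the model names, and the prices are all placeholders for illustration, not REI's real values:

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens: (input, output).
# Placeholders only; real rates differ by provider and change over time.
PRICES = {
    "model-a": (3.00, 15.00),
    "local-model": (0.0, 0.0),
}


@dataclass
class Usage:
    input_tokens: int = 0
    output_tokens: int = 0


class SessionMetrics:
    """Accumulates token usage per model and estimates cost."""

    def __init__(self) -> None:
        self.by_model: dict[str, Usage] = defaultdict(Usage)

    def record(self, model: str, input_tokens: int, output_tokens: int) -> None:
        """Call once per response (or per streamed usage update)."""
        usage = self.by_model[model]
        usage.input_tokens += input_tokens
        usage.output_tokens += output_tokens

    def cost(self, model: str) -> float:
        """Estimated USD cost for one model; unknown models count as free."""
        in_price, out_price = PRICES.get(model, (0.0, 0.0))
        usage = self.by_model[model]
        return (usage.input_tokens * in_price
                + usage.output_tokens * out_price) / 1_000_000

    def total_cost(self) -> float:
        return sum(self.cost(model) for model in list(self.by_model))
```

Calling `record` after each response keeps the running total current, which is all a live view needs to redraw.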

Edit Diffs in Chat

When REI modifies a file, you now see the changes inline as a proper diff. Additions highlighted, deletions struck through, line numbers aligned. No more squinting at before/after blocks trying to spot what changed. This is especially useful for reviewing changes before you approve them in POWC mode.
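For a sense of what sits underneath an inline diff, here is a minimal sketch using Python's standard `difflib`. The `render_diff` helper is hypothetical, and REI's own renderer may work differently; this only produces the unified-diff text that a chat view could then style.

```python
import difflib


def render_diff(path: str, before: str, after: str) -> str:
    """Return a unified diff of two versions of a file as one string."""
    diff = difflib.unified_diff(
        before.splitlines(keepends=True),
        after.splitlines(keepends=True),
        fromfile=f"a/{path}",
        tofile=f"b/{path}",
    )
    return "".join(diff)


if __name__ == "__main__":
    old = "x = 1\ny = 2\n"
    new = "x = 1\ny = 3\n"
    print(render_diff("config.py", old, new))
```

Lines starting with `-` are deletions and lines starting with `+` are additions, so a UI only has to map those prefixes to strikethrough and highlight styles.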

LSP Integration

Language Server Protocol integration brings IDE-level intelligence to REI. TypeScript, Python, and Rust projects get semantic understanding: what types exist, what functions are available, what imports are missing. The Checker Agent uses this to catch errors that would otherwise require running the code to discover.

Code generation quality improves too. With LSP data, REI hallucinates fewer function calls and produces more idiomatic code for your specific project.
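As a rough illustration of what talking to a language server involves: LSP messages are JSON-RPC bodies prefixed with a `Content-Length` header, and servers report problems through `textDocument/publishDiagnostics` notifications. The sketch below frames a message, parses it back, and pulls out the errors. The helper names are hypothetical, and this is not how the Checker Agent is actually wired.

```python
import json


def frame(payload: dict) -> bytes:
    """Encode a JSON-RPC message with the LSP Content-Length header."""
    body = json.dumps(payload).encode("utf-8")
    header = f"Content-Length: {len(body)}\r\n\r\n".encode("ascii")
    return header + body


def parse_frame(data: bytes) -> dict:
    """Decode one framed message (assumes the full frame is in `data`)."""
    header, _, body = data.partition(b"\r\n\r\n")
    length = None
    for line in header.split(b"\r\n"):
        name, _, value = line.partition(b":")
        if name.strip().lower() == b"content-length":
            length = int(value.strip())
    if length is None:
        raise ValueError("missing Content-Length header")
    return json.loads(body[:length].decode("utf-8"))


def errors_from(notification: dict) -> list[str]:
    """Format error-level diagnostics as 'uri:line: message' strings."""
    if notification.get("method") != "textDocument/publishDiagnostics":
        return []
    params = notification["params"]
    found = []
    for diag in params["diagnostics"]:
        if diag.get("severity") == 1:  # 1 means Error in the LSP spec
            line = diag["range"]["start"]["line"] + 1  # LSP lines are 0-based
            found.append(f"{params['uri']}:{line}: {diag['message']}")
    return found
```

A checker built on this could feed the resulting strings back to the model before any code is run.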

Architecture Improvements

- Prompt templates moved to external files, so you can customize REI's behavior without touching code

- Mandatory file read before edit, so REI can no longer modify files it hasn't seen

- Extended thinking support across all providers that offer it

- Prompt caching for faster responses on repeated patterns
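The read-before-edit rule from the list above can be pictured as a small guard around file writes. A minimal sketch, with hypothetical names (`FileSession`, `EditRejected`); the extra check that the file has not changed on disk since it was read is my own assumption about what such a guard might also do, not something REI is confirmed to implement:

```python
import hashlib
from pathlib import Path


class EditRejected(Exception):
    """Raised when an edit targets a file that was not read first."""


class FileSession:
    def __init__(self) -> None:
        # Resolved path -> SHA-256 of the content as last seen.
        self._seen: dict[Path, str] = {}

    @staticmethod
    def _digest(text: str) -> str:
        return hashlib.sha256(text.encode("utf-8")).hexdigest()

    def read(self, path: str) -> str:
        """Read a file and remember what its content looked like."""
        p = Path(path).resolve()
        text = p.read_text(encoding="utf-8")
        self._seen[p] = self._digest(text)
        return text

    def edit(self, path: str, new_text: str) -> None:
        """Overwrite a file only if it was read and is unchanged since."""
        p = Path(path).resolve()
        if p not in self._seen:
            raise EditRejected(f"{p} has not been read in this session")
        current = self._digest(p.read_text(encoding="utf-8"))
        if current != self._seen[p]:
            raise EditRejected(f"{p} changed on disk since it was read")
        p.write_text(new_text, encoding="utf-8")
        self._seen[p] = self._digest(new_text)
```

Routing every write through `edit` means a model cannot overwrite a file based on a guess about its contents.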

REI isn't just a Claude wrapper anymore. It's a platform that can leverage any model, any provider, any local setup. The flexibility is already changing how I work.