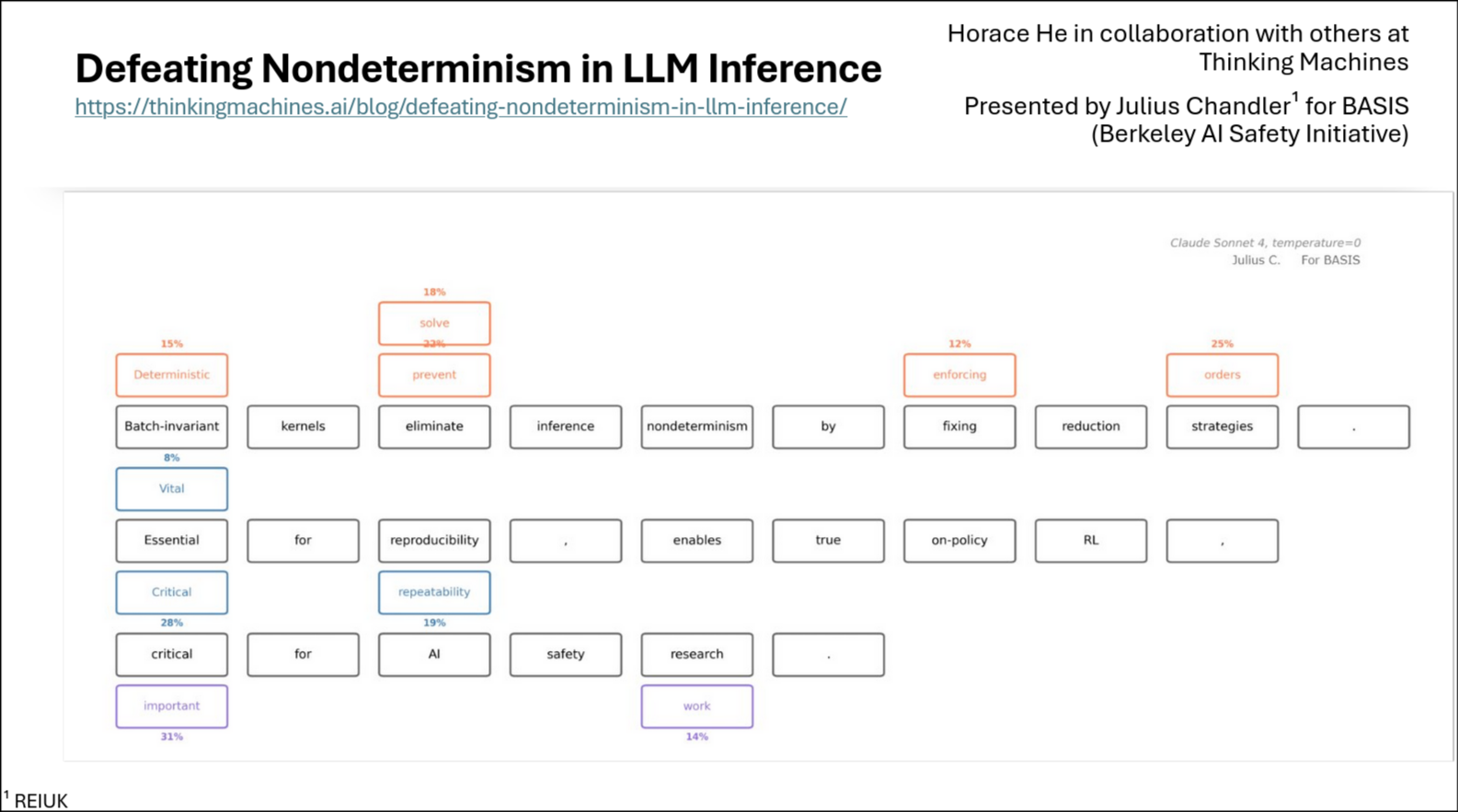

I gave a talk to the Berkeley AI Safety Student Initiative (BASIS) technical track scholars on "Defeating Nondeterminism in LLM Inference," a presentation based on research by Horace He and the team at Thinking Machines.

I enjoyed presenting to so many intelligent and interested young men and women. Slides are available upon request.

Why Determinism Matters

Reproducible outputs from language models matter for:

- Scientific reproducibility of research results

- Reinforcement learning training pipelines

- Testing and compliance in regulated industries

- Robustness evaluation and debugging

Even with temperature set to zero (which should make sampling deterministic by always selecting the highest-probability token), LLM APIs can still produce different outputs across runs. Frustrating.
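To make the temperature-zero point concrete, here is a minimal sketch of greedy selection. The logit values are made up for illustration, and `greedy_pick` is a hypothetical helper, not part of any inference library. It shows why tiny numerical differences matter: when two candidates are nearly tied, a change in the last few bits of one logit flips the chosen token, and every later token can then diverge.

```python
def greedy_pick(logits):
    """Temperature-zero sampling: return the index of the largest logit."""
    best = 0
    for i in range(1, len(logits)):
        if logits[i] > logits[best]:
            best = i
    return best


# Two runs of the "same" computation whose logits differ only by rounding-scale noise.
# (Illustrative numbers, not measurements from a real model.)
logits_run_a = [1.5, 4.0, 4.0 + 1e-12]
logits_run_b = [1.5, 4.0 + 2e-12, 4.0 + 1e-12]

pick_a = greedy_pick(logits_run_a)
pick_b = greedy_pick(logits_run_b)
print(pick_a, pick_b)  # 2 1
```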

The Common Misconception

Most people blame race conditions and floating-point non-associativity. The logic goes: GPUs perform calculations in parallel, thread order is nondeterministic, and floating-point math isn't associative (e.g., (0.1+1e20)−1e20=0, but 0.1+(1e20−1e20)=0.1). Therefore, results should vary.
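The non-associativity example is easy to check directly. This is plain Python using IEEE 754 double precision, with no GPU involved:

```python
# Same three numbers, two groupings, two different answers.
left = (0.1 + 1e20) - 1e20   # 0.1 is rounded away when added to 1e20
right = 0.1 + (1e20 - 1e20)  # the large terms cancel first, so 0.1 survives

print(left)           # 0.0
print(right)          # 0.1
print(left == right)  # False
```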

The research shows this isn't the main culprit. Most operations are actually "run-to-run deterministic": running the same matrix multiplication twice on the same inputs gives bitwise identical results. In that sense, even the forward pass of an inference server like vLLM can be called deterministic: given exactly the same batch of requests, it produces the same outputs. Yet from the user's perspective, results remain nondeterministic.

The Real Culprit: Lack of Batch Invariance

The actual source of nondeterminism is batch size variability. When you send a request to an LLM inference server, your request gets batched with other users' requests to maximize efficiency. Server load constantly changes, meaning batch size is effectively random from your perspective.

GPU kernels lack "batch invariance": the result computed for one row of a batch can depend on how many other rows are in that batch. When batch size is small, kernels parallelize within each row to avoid wasting compute. When batch size is large, each row gets its own core. Different parallelization strategies mean different reduction orders, and with floating-point arithmetic, different orders produce different results.
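The effect can be imitated on a CPU. The sketch below is a toy model, not a real GPU kernel: `row_sums` and its `small_batch_threshold` are hypothetical stand-ins for a kernel that switches reduction strategy with batch size. Explicit loops are used so that the summation order is exactly what the code says.

```python
def sequential_sum(values):
    """Reduce left to right, as one core working through a whole row would."""
    acc = 0.0
    for v in values:
        acc += v
    return acc


def split_sum(values, num_splits):
    """Reduce each chunk separately, then combine the partial sums."""
    chunk = -(-len(values) // num_splits)  # ceiling division
    partials = [sequential_sum(values[i:i + chunk])
                for i in range(0, len(values), chunk)]
    return sequential_sum(partials)


def row_sums(batch, small_batch_threshold=2):
    """Toy 'kernel': the reduction strategy depends on the batch size."""
    if len(batch) < small_batch_threshold:
        # Few rows: split each row across "cores" to keep them busy.
        return [split_sum(row, num_splits=2) for row in batch]
    # Many rows: one "core" per row, plain sequential reduction.
    return [sequential_sum(row) for row in batch]


my_row = [0.1, 1e20, -1e20, 0.1]   # values chosen to make rounding visible
other_row = [1.0, 2.0, 3.0, 4.0]   # someone else's request

alone = row_sums([my_row])[0]               # my request in a batch of 1
batched = row_sums([my_row, other_row])[0]  # same request in a batch of 2

print(alone, batched)  # 0.0 0.1
```

The input row is identical in both calls. Only the company it keeps has changed, and that alone changes the answer.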

The Solutions

The research demonstrates batch-invariant implementations for three key operations:

- RMSNorm. Accept idle cores for small batches instead of splitting reductions across cores.

- Matrix multiplication. Pick one kernel configuration and stick with it. No Split-K optimizations that change reduction order.

- Attention. Use fixed split-sizes (not split-counts) and consistent tile sizes to guarantee the same reduction order regardless of batch size.
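The split-size versus split-count distinction in the attention item can be sketched as follows. Everything here is an illustrative assumption: `pick_num_splits` is a made-up scheduling heuristic, and the split size of 256 and the 8 cores are arbitrary. The point is only that boundaries derived from a split count move when the batch size changes, while boundaries derived from a fixed split size depend on the sequence length alone.

```python
def splits_by_count(kv_len, num_splits):
    """Divide the KV sequence into a requested number of chunks."""
    size = -(-kv_len // num_splits)  # ceiling division
    return [(s, min(s + size, kv_len)) for s in range(0, kv_len, size)]


def splits_by_size(kv_len, split_size=256):
    """Divide the KV sequence into chunks of a fixed size; the last may be short."""
    return [(s, min(s + split_size, kv_len)) for s in range(0, kv_len, split_size)]


def pick_num_splits(batch_size, num_cores=8):
    """Hypothetical heuristic: use more splits when fewer rows are available."""
    return max(1, num_cores // batch_size)


kv_len = 1000

# Split-count strategy: boundaries change with batch size.
count_small_batch = splits_by_count(kv_len, pick_num_splits(batch_size=2))
count_large_batch = splits_by_count(kv_len, pick_num_splits(batch_size=4))

# Split-size strategy: boundaries ignore batch size entirely.
fixed = splits_by_size(kv_len)

print(count_small_batch)  # [(0, 250), (250, 500), (500, 750), (750, 1000)]
print(count_large_batch)  # [(0, 500), (500, 1000)]
print(fixed)              # [(0, 256), (256, 512), (512, 768), (768, 1000)]
```

Because the chunk boundaries fix the order in which partial results are combined, identical boundaries mean an identical reduction order, at the price of less flexibility in how work is spread across cores.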

Results

With batch-invariant kernels running on top of vLLM, the same prompt was sampled 1000 times at temperature zero: standard vLLM produced 80 unique completions, while the deterministic version produced exactly 1. True determinism also enables proper on-policy reinforcement learning without reward collapse, because the numbers computed during sampling can then match those computed during training.
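A measurement like this is simple to reproduce against your own setup. The sketch below assumes a `generate(prompt)` callable that returns a completion string. That callable is a placeholder for whatever client you use, not a vLLM API, and the two stub generators exist only so the example runs on its own.

```python
from collections import Counter


def count_unique_completions(generate, prompt, num_runs=1000):
    """Call generate(prompt) repeatedly and tally the distinct completions."""
    return Counter(generate(prompt) for _ in range(num_runs))


def make_flaky_generator():
    """Stub: output varies with hidden state, a crude stand-in for server load."""
    call_count = 0

    def generate(prompt):
        nonlocal call_count
        call_count += 1
        return prompt + (" A" if call_count % 3 else " B")

    return generate


def deterministic_generate(prompt):
    """Stub: always returns the same completion."""
    return prompt + " A"


flaky_counts = count_unique_completions(make_flaky_generator(), "Tell me about", 30)
stable_counts = count_unique_completions(deterministic_generate, "Tell me about", 30)

print(len(flaky_counts), len(stable_counts))  # 2 1
```

A deterministic stack should report exactly one unique completion no matter how many runs you do or how busy the server is.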

The performance cost exists but is manageable, and for many applications, the tradeoff is worthwhile.